Throughout our previous posts, we have been moving forward in our organisation’s transformation to DevOps. We have learnt how to develop the Value Stream Map for our value delivery process, and we have looked at different methodological tools to help us manage our process, including Lean Thinking and the Theory of Constraints. In addition, we have restructured our teams to focus them on being multifunctional and self-organised. Finally, we have analysed the set of tools that will help us carry out our mission.

Armed with all this information, we can now address a key element in the automation of our customer value delivery process: the pattern called the Deployment Pipeline.

The first step is to define what a deployment pipeline is. As always, I will share my personal view and point to specific sources for a more exhaustive description. In this instance, the book Continuous Delivery: Reliable Software Releases through Build, Test, and Deployment Automation by Jez Humble and David Farley is a must-read. In fact, a third of the book is devoted to describing the Deployment Pipeline pattern.

From my point of view, a Deployment Pipeline is a visual implementation of our Value Stream Map in which every step that can be automated is automated.

The key concepts in this brief description are visibility and automation, but others, such as improved feedback and increased confidence in the process, are also involved.

The following summarises the benefits obtained from using a deployment pipeline:

- Increased speed. With an automated process instead of a manual one, the speed of execution is much higher, resulting in reduced cycle times, a key indicator in DevOps.

- A repeatable process. By using automation, we end up with a process that will always run the same way and can be repeated in different environments.

- Increased confidence in the process. A deployment pipeline can be run multiple times a day, so every step is exercised repeatedly long before a production release, which increases confidence in the process.

- Improved feedback. If something goes wrong, the whole team detects it immediately and can (and must) correct it so that the pipeline is operational again.

- Convergence and consistency of environments. The automated provisioning and deployment process makes different environments more consistent over time.

- A single source of truth. When we use the version control server as the source of the pipeline, it becomes the source of information for the entire process.

Designing the Deployment Pipeline

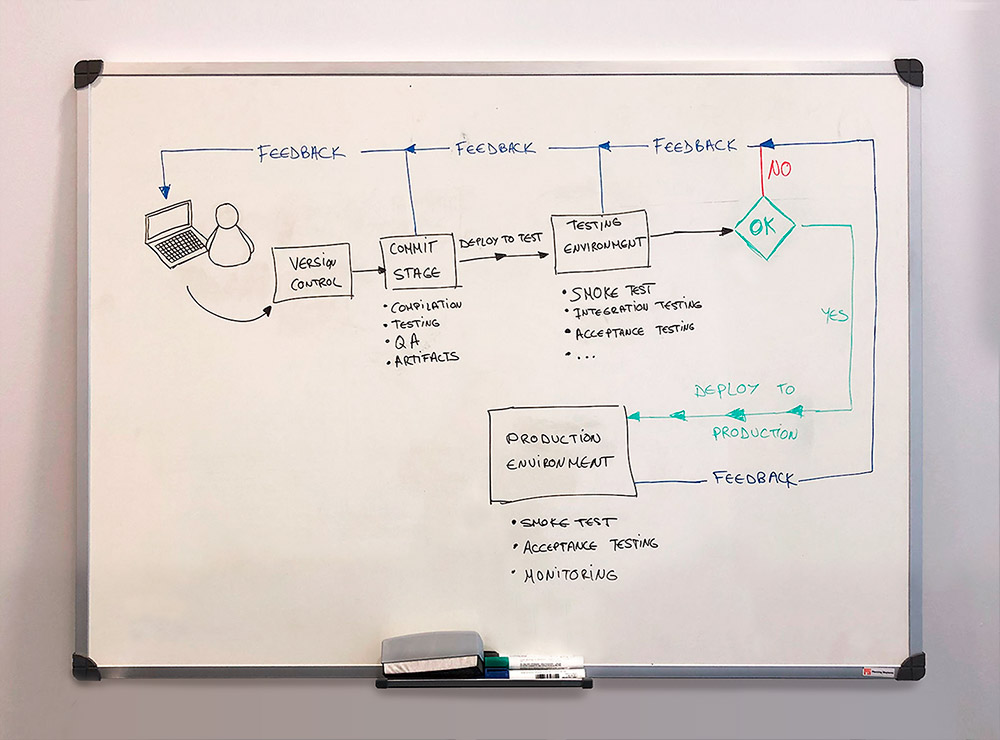

Now that we know what a deployment pipeline is and understand the benefits of using one, it is time to design it. To do this, we take our Value Stream Map and transpose it to the pipeline. We need to keep in mind that the Value Stream Map includes steps that cannot be automated, such as the generation of ideas, design or coding. In other words, our automated deployment pipeline begins at the moment the intellectual work is finished, which is when a team member commits their work to the version control server. This event triggers a new instance of the deployment pipeline, which then advances through its stages.

At this first stage, known as the Commit Stage, several tasks are performed:

- Code compilation.

- Execution of unit tests.

- Code quality analysis: style, coverage, complexity.

- Artifact generation. If everything is correct, the artifacts will be generated and stored somewhere for use in subsequent stages.

This is where we have our first feedback point. If any of the above were to fail, the pipeline would stop, and the issue would have to be corrected. It is important to note that this stage must be very fast for the feedback to be effective.
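The fail-fast behaviour of the commit stage can be sketched in a few lines of Python. This is only an illustration under assumptions: the `run_stage` function, the `StageResult` type and the lambda placeholders are hypothetical names, and a real pipeline would invoke the compiler, the test runner and the analysis tools instead.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class StageResult:
    passed: bool
    completed: List[str]          # tasks that finished successfully, in order
    failed_task: Optional[str]    # first task that failed, if any


def run_stage(tasks: List[Tuple[str, Callable[[], bool]]]) -> StageResult:
    """Run the tasks in order and stop at the first failure."""
    completed: List[str] = []
    for name, task in tasks:
        if not task():
            # Stop here: later tasks, including artifact generation, never run.
            return StageResult(passed=False, completed=completed, failed_task=name)
        completed.append(name)
    return StageResult(passed=True, completed=completed, failed_task=None)


# Hypothetical placeholders standing in for the real tools.
commit_stage = [
    ("compile", lambda: True),
    ("unit tests", lambda: False),        # simulate a failing unit test
    ("quality analysis", lambda: True),
    ("generate artifacts", lambda: True),
]

result = run_stage(commit_stage)
print(result.failed_task)   # unit tests
print(result.completed)     # ['compile']
```

Because the artifact step comes last, a failure anywhere earlier means no artifact is produced, so nothing broken can reach the later stages.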

Once we have completed the commit stage, we can move on to the deployment stage in a test environment and run the different test cycles. At this stage, we have to perform the following tasks:

- Deployment to the test environment of the artifacts generated in the commit stage. It is important that they are the same artifacts and are not rebuilt in each environment.

- Deployment smoke test. It is essential to do an initial test to verify that the deployment has worked correctly. If it has failed, it no longer makes sense to waste time on the subsequent tests.

- Automated execution of integration tests.

- Automated execution of acceptance tests.

- We can include manual exploratory testing here, or we can do this testing in a subsequent environment.

- We can also include non-functional testing, such as performance and security tests. Again, we can do this testing in a subsequent environment.

If at this point everything is correct, we can move on to the next stage in the pipeline. Keep in mind that this stage takes longer than the commit stage, and we should take this into account when designing the pipeline. For example, if the acceptance tests take a long time, they could be split into groups and run in parallel.
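As a rough sketch of this stage, the following Python example gates the slower suites behind the smoke test and then runs them in parallel. The function names and suite names are assumptions made for illustration, and the lambdas stand in for real test runs. Threads are used here on the assumption that each suite mostly waits on external processes or network calls.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, Tuple

Check = Callable[[], bool]


def run_suites_in_parallel(suites: Dict[str, Check], max_workers: int = 4) -> Dict[str, bool]:
    """Run independent test suites concurrently and collect pass/fail per suite."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {name: pool.submit(suite) for name, suite in suites.items()}
        return {name: future.result() for name, future in futures.items()}


def run_test_stage(smoke_test: Check, suites: Dict[str, Check]) -> Tuple[bool, Dict[str, bool]]:
    """Skip the slow suites entirely if the deployment smoke test fails."""
    if not smoke_test():
        return False, {}
    results = run_suites_in_parallel(suites)
    return all(results.values()), results


# Hypothetical suites; one of them is made to fail.
suites = {
    "integration": lambda: True,
    "acceptance: orders": lambda: True,
    "acceptance: billing": lambda: False,
}

passed, results = run_test_stage(lambda: True, suites)
print(passed)                            # False
print(results["acceptance: billing"])    # False
```

Collecting a result per suite, rather than a single flag, keeps the feedback specific: the team sees which group failed without rerunning everything.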

At this second feedback point, we are already confident that our code deploys successfully and runs according to specifications or acceptance testing requirements. We can repeat this process in as many environments as we like, bearing in mind that as we progress along the pipeline, each subsequent environment must increasingly resemble the final production environment. Ideally, all environments should be identical to production, but this is not always possible.
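One way to make sure each environment receives the very same artifacts built in the commit stage is to record a checksum when the artifact is published and verify it before every deployment. The sketch below assumes an in-memory `ArtifactStore` as a stand-in for a real artifact repository; the class, the `promote` function and the environment names are all hypothetical.

```python
import hashlib
from typing import Dict, List


def sha256_of(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


class ArtifactStore:
    """In-memory stand-in for an artifact repository (hypothetical)."""

    def __init__(self) -> None:
        self._artifacts: Dict[str, bytes] = {}

    def publish(self, version: str, data: bytes) -> str:
        """Store the artifact once and return its checksum."""
        self._artifacts[version] = data
        return sha256_of(data)

    def fetch(self, version: str) -> bytes:
        return self._artifacts[version]


def promote(store: ArtifactStore, version: str, expected_digest: str,
            environments: List[str]) -> List[str]:
    """Deploy the same stored artifact to each environment, in order."""
    deployed: List[str] = []
    for env in environments:
        data = store.fetch(version)
        if sha256_of(data) != expected_digest:
            # The artifact differs from the one built in the commit stage.
            raise ValueError(f"artifact {version} changed before reaching {env}")
        deployed.append(env)   # a real pipeline would deploy `data` here
    return deployed


store = ArtifactStore()
digest = store.publish("1.0.0", b"compiled application")
print(promote(store, "1.0.0", digest, ["test", "staging", "production"]))
```

The artifact is built and published once; later stages only fetch and verify it, which is what keeps the environments comparable.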

Finally, if all tests in our test environments have been successful, we can start the production deployment stage. The deployment process must be identical to the one carried out in the previous environments. The tasks to be performed at this stage are the following:

- Deployment to the production environment.

- Deployment smoke test. Keep in mind that we need to be prepared to undo the change if something goes wrong.

- Automated execution of a subset of tests to establish the success of the release. A subset is used to speed up the detection of any errors.

- Manual validation of the deployment.
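The first two tasks above, deploying and then undoing the change if the smoke test fails, can be sketched as follows. `FakeEnvironment` and `release` are hypothetical names used only for illustration. The rollback here simply redeploys the previous version through the same deployment step, which is one possible strategy among several.

```python
from typing import List


class FakeEnvironment:
    """Stand-in for a production environment (hypothetical)."""

    def __init__(self, current_version: str, healthy_versions: List[str]) -> None:
        self.current_version = current_version
        self._healthy_versions = healthy_versions

    def deploy(self, version: str) -> None:
        self.current_version = version

    def smoke_test(self) -> bool:
        # Simulated check: only known-good versions pass.
        return self.current_version in self._healthy_versions


def release(env: FakeEnvironment, new_version: str) -> bool:
    """Deploy new_version; restore the previous version if the smoke test fails."""
    previous = env.current_version
    env.deploy(new_version)
    if env.smoke_test():
        return True
    env.deploy(previous)   # undo the change using the same deployment process
    return False


production = FakeEnvironment(current_version="1.0.0", healthy_versions=["1.0.0", "1.1.0"])

print(release(production, "1.1.0"), production.current_version)   # True 1.1.0
print(release(production, "1.2.0"), production.current_version)   # False 1.1.0
```

The subset of automated tests and the manual validation would follow a successful `release` call; they are left out to keep the sketch short.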

Basically, our deployment pipeline process would be as illustrated below:

Once the deployment pipeline has been designed, it needs to be implemented. I will take a deeper dive into this phase in my next blog post.

Emiliano Sutil is a Project Manager at Xeridia